Computer vision


Computer vision is a field that includes methods for acquiring, processing, analysing, and understanding images and, in general, high-dimensional data from the real world in order to produce numerical or symbolic information, e.g., in the form of decisions. A theme in the development of this field has been to duplicate the abilities of human vision by electronically perceiving and understanding an image. This image understanding can be seen as the disentangling of symbolic information from image data using models constructed with the aid of geometry, physics, statistics, and learning theory.

Applications range from tasks such as industrial machine vision systems which, say, inspect bottles speeding by on a production line, to research into artificial intelligence and computers or robots that can comprehend the world around them. The computer vision and machine vision fields have significant overlap. Computer vision covers the core technology of automated image analysis which is used in many fields. Machine vision usually refers to a process of combining automated image analysis with other methods and technologies to provide automated inspection and robot guidance in industrial applications.

As a scientific discipline, computer vision is concerned with the theory behind artificial systems that extract information from images. The image data can take many forms, such as video sequences, views from multiple cameras, or multi-dimensional data from a medical scanner.

As a technological discipline, computer vision seeks to apply its theories and models to the construction of computer vision systems. Examples of applications of computer vision include systems for:

Sub-domains of computer vision include scene reconstruction, event detection, video tracking, object recognition, learning, indexing, motion estimation, and image restoration.

In most practical computer vision applications, the computers are pre-programmed to solve a particular task, but methods based on learning are now becoming increasingly common.

Areas of artificial intelligence deal with autonomous planning or deliberation for robotic systems to navigate through an environment. A detailed understanding of these environments is required to navigate through them. Information about the environment could be provided by a computer vision system, acting as a vision sensor and providing high-level information about the environment and the robot.

Artificial intelligence and computer vision share other topics such as pattern recognition and learning techniques. Consequently, computer vision is sometimes seen as a part of the artificial intelligence field or the computer science field in general.

Physics is another field that is closely related to computer vision. Computer vision systems rely on image sensors, which detect electromagnetic radiation, typically in the form of either visible or infra-red light. The sensors are designed using solid-state physics. The process by which light interacts with surfaces is explained using physics. Physics also explains the behavior of optics, which are a core part of most imaging systems. Sophisticated image sensors even require quantum mechanics to provide a complete understanding of the image formation process. Also, various measurement problems in physics can be addressed using computer vision, for example motion in fluids.

A third field which plays an important role is neurobiology, specifically the study of the biological vision system. Over the last century, there has been an extensive study of eyes, neurons, and the brain structures devoted to processing of visual stimuli in both humans and various animals. This has led to a coarse, yet complicated, description of how "real" vision systems operate in order to solve certain vision-related tasks. These results have led to a subfield within computer vision where artificial systems are designed to mimic the processing and behavior of biological systems, at different levels of complexity. Also, some of the learning-based methods developed within computer vision (e.g. neural net based image and feature analysis and classification) have their background in biology.

Some strands of computer vision research are closely related to the study of biological vision, just as many strands of AI research are closely tied to research into human consciousness and the use of stored knowledge to interpret, integrate and utilize visual information. The field of biological vision studies and models the physiological processes behind visual perception in humans and other animals. Computer vision, on the other hand, studies and describes the processes implemented in software and hardware behind artificial vision systems. Interdisciplinary exchange between biological and computer vision has proven fruitful for both fields.

Yet another field related to computer vision is signal processing. Many methods for processing of one-variable signals, typically temporal signals, can be extended in a natural way to processing of two-variable signals or multi-variable signals in computer vision. However, because of the specific nature of images there are many methods developed within computer vision which have no counterpart in the processing of one-variable signals. Together with the multi-dimensionality of the signal, this defines a subfield in signal processing as a part of computer vision.
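As a minimal sketch of how a one-variable method can extend to two variables, the following Python code applies a three-tap smoothing filter to a 1D signal and then reuses it along the rows and columns of a small image. The function names `smooth_1d` and `smooth_2d`, the kernel weights, and the edge-replication border handling are illustrative assumptions, not taken from any particular library.

```python
def smooth_1d(signal, kernel=(0.25, 0.5, 0.25)):
    """Filter a 1D sequence with a short symmetric kernel, replicating edge samples."""
    half = len(kernel) // 2
    n = len(signal)
    out = []
    for i in range(n):
        acc = 0.0
        for k, weight in enumerate(kernel):
            j = min(max(i + k - half, 0), n - 1)  # clamp the index at the borders
            acc += weight * signal[j]
        out.append(acc)
    return out


def smooth_2d(image, kernel=(0.25, 0.5, 0.25)):
    """Apply the same 1D filter along rows, then along columns (a separable 2D filter)."""
    rows = [smooth_1d(row, kernel) for row in image]
    cols = [smooth_1d(list(col), kernel) for col in zip(*rows)]
    return [list(row) for row in zip(*cols)]  # transpose back to row-major order


if __name__ == "__main__":
    impulse = [[0, 0, 0], [0, 4, 0], [0, 0, 0]]
    for row in smooth_2d(impulse):
        print(row)
```

The two-variable version here is just the one-variable filter applied twice, which works only because this particular kernel is separable; many image operations do not decompose this way.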

Besides the above-mentioned views on computer vision, many of the related research topics can also be studied from a purely mathematical point of view. For example, many methods in computer vision are based on statistics, optimization or geometry. Finally, a significant part of the field is devoted to the implementation aspect of computer vision: how existing methods can be realized in various combinations of software and hardware, or how these methods can be modified in order to gain processing speed without losing too much performance.

The fields most closely related to computer vision are image processing, image analysis and machine vision. There is a significant overlap in the range of techniques and applications that these cover. This implies that the basic techniques that are used and developed in these fields are more or less identical, which can be interpreted as there being only one field with different names. On the other hand, it appears to be necessary for research groups, scientific journals, conferences and companies to present or market themselves as belonging specifically to one of these fields and, hence, various characterizations which distinguish each of the fields from the others have been presented.

Computer vision is, in some ways, the inverse of computer graphics. While computer graphics produces image data from 3D models, computer vision often produces 3D models from image data. There is also a trend towards a combination of the two disciplines, e.g., as explored in augmented reality.

The following characterizations appear relevant but should not be taken as universally accepted:

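One of the most prominent application fields is medical computer vision or medical image processing. This area is characterized by the extraction of information from image data for the purpose of making a medical diagnosis of a patient. Generally, image data is in the form of microscopy images, X-ray images, angiography images, ultrasonic images, and tomography images. An example of information which can be extracted from such image data is detection of tumours, arteriosclerosis or other malign changes. It can also be measurements of organ dimensions, blood flow, etc. This application area also supports medical research by providing new information, e.g., about the structure of the brain, or about the quality of medical treatments.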

A second application area in computer vision is in industry, sometimes called machine vision, where information is extracted for the purpose of supporting a manufacturing process. One example is quality control where details or final products are being automatically inspected in order to find defects. Another example is measurement of position and orientation of details to be picked up by a robot arm. Machine vision is also heavily used in agricultural processes to remove undesirable foodstuff from bulk material, a process called optical sorting.

Military applications are probably one of the largest areas for computer vision. The obvious examples are detection of enemy soldiers or vehicles and missile guidance. More advanced systems for missile guidance send the missile to an area rather than a specific target, and target selection is made when the missile reaches the area based on locally acquired image data. Modern military concepts, such as "battlefield awareness", imply that various sensors, including image sensors, provide a rich set of information about a combat scene which can be used to support strategic decisions. In this case, automatic processing of the data is used to reduce complexity and to fuse information from multiple sensors to increase reliability.

One of the newer application areas is autonomous vehicles, which include submersibles, land-based vehicles (small robots with wheels, cars or trucks), aerial vehicles, and unmanned aerial vehicles (UAV). The level of autonomy ranges from fully autonomous (unmanned) vehicles to vehicles where computer vision based systems support a driver or a pilot in various situations. Fully autonomous vehicles typically use computer vision for navigation, i.e. for knowing where they are, or for producing a map of their environment (SLAM), and for detecting obstacles. It can also be used for detecting certain task-specific events, e.g., a UAV looking for forest fires. Examples of supporting systems are obstacle warning systems in cars, and systems for autonomous landing of aircraft. Several car manufacturers have demonstrated systems for autonomous driving of cars, but this technology has still not reached a level where it can be put on the market. There are ample examples of military autonomous vehicles ranging from advanced missiles, to UAVs for recon missions or missile guidance. Space exploration is already being carried out with autonomous vehicles using computer vision, e.g., NASA's Mars Exploration Rover and ESA's ExoMars Rover.

Other application areas include:

of the object relative to the camera.

Different varieties of the recognition problem are described in the literature:

Several specialized tasks based on recognition exist, such as:

An example in this field is inpainting.

For 3D volume recognition, the 2D pre-processing steps may be applied first (noise reduction, contrast enhancement, etc.), because initially the data may be in a 2D format from multiple cameras or images, and the 3D data is extracted from the 2D images using parallax depth perception.

Generally a 3D volume cannot be easily reconstructed from a single 2D image, because 2D depth analysis of a single image requires a great deal of secondary knowledge about shapes and shadows, and what constitutes separate objects or simply different parts of the same object. But a single 2D camera can be used to do 3D depth analysis if several images can be captured from different positions in space and parallax analysis is done between the different images.
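The parallax analysis described above can be sketched for the simplest case. The following Python function assumes an idealized pinhole camera that is moved sideways by a known distance (the baseline) without rotating, so that a scene point shifts horizontally between the two images by a disparity d, and its depth follows from similar triangles as Z = f * B / d. The function name, parameter names, and example values are illustrative assumptions, not part of any particular system.

```python
def depth_from_disparity(focal_px, baseline_m, disparity_px):
    """Return depth in metres for one matched point, or None if no depth is recoverable."""
    if disparity_px is None or disparity_px <= 0:
        # No match in the second image, or no measurable shift: no depth information.
        return None
    return focal_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Hypothetical values: 700-pixel focal length, camera moved 0.1 m sideways.
    for shift in (70.0, 35.0, 7.0, None):
        print(shift, depth_from_disparity(700.0, 0.1, shift))
```

Nearer points shift more between the two views, so larger disparities map to smaller depths, and points that cannot be matched in both images yield no depth value at all.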

3D volume recognition from only two 2D images in parallax results in a distorted 2.5D plane with depth information, with incomplete backsides to the object field. Transitions between near and far objects are sudden but continuous. Areas of shadow or washout, or areas where a shape does not appear in both images, result in a null space of no depth information.

The rear profile of shape impressions in this 2.5D plane can sometimes be inferred from the incomplete front sides, using a database of known 3D shape profiles. The shape recognition process is faster if certain assumptions can be made about the images to be recognized; for example, a warship radar which generates a 2.5D depth map of friend/foe objects on the surrounding ocean only needs to identify shapes known to represent other ships in an upright position. It does not need to consider shapes where the ships are lying sideways, upside down, or at an unusually high-pitched angle out of the water.

The volumetric detail of the back sides of objects can be increased by using many more parallax image sets of the scene from different angles and locations, and comparing each 2.5D volume against previously analyzed 2.5D volumes to find shape/angle overlap between the volumes. 3D parallax recognition accuracy can be higher and faster if the different 2D images are captured from known specific source positions and angles.

Applications range from tasks such as industrial machine vision systems which, say, inspect bottles speeding by on a production line, to research into artificial intelligence and computers or robots that can comprehend the world around them. The computer vision and machine vision fields have significant overlap. Computer vision covers the core technology of automated image analysis which is used in many fields. Machine vision usually refers to a process of combining automated image analysis with other methods and technologies to provide automated inspection and robot guidance in industrial applications.

As a scientific discipline, computer vision is concerned with the theory behind artificial systems that extract information from images. The image data can take many forms, such as video sequences, views from multiple cameras, or multi-dimensional data from a medical scanner.

As a technological discipline, computer vision seeks to apply its theories and models to the construction of computer vision systems. Examples of applications of computer vision include systems for:

- Controlling processes (e.g., an industrial robot).

- Navigation (e.g. by an autonomous vehicle or mobile robot).

- Detecting events (e.g., for visual surveillance or people counting).

- Organizing information (e.g., for indexing databases of images and image sequences).

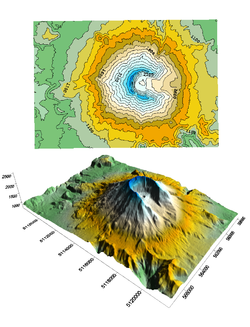

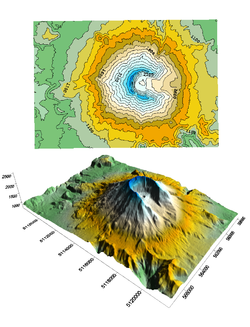

- Modeling objects or environments (e.g., medical image analysis or topographical modeling).

- Interaction (e.g., as the input to a device for computer-human interaction).

- Automatic inspection, e.g. in manufacturing applications

Sub-domains of computer vision include scene reconstruction, event detection, video tracking, object recognition, learning, indexing, motion estimation, and image restoration.

In most practical computer vision applications, the computers are pre-programmed to solve a particular task, but methods based on learning are now becoming increasingly common.

Related fields

Areas of artificial intelligence deal with autonomous planning or deliberation for robotic systems to navigate through an environment. A detailed understanding of these environments is required to navigate through them. Information about the environment could be provided by a computer vision system, acting as a vision sensor and providing high-level information about the environment and the robot.

Artificial intelligence and computer vision share other topics such as pattern recognition and learning techniques. Consequently, computer vision is sometimes seen as a part of the artificial intelligence field or the computer science field in general.

Physics is another field that is closely related to computer vision. Computer vision systems rely on image sensors, which detect electromagnetic radiation, typically in the form of either visible or infra-red light. The sensors are designed using solid-state physics. The process by which light interacts with surfaces is explained using physics. Physics also explains the behavior of optics, which are a core part of most imaging systems. Sophisticated image sensors even require quantum mechanics to provide a complete understanding of the image formation process. Also, various measurement problems in physics can be addressed using computer vision, for example motion in fluids.

A third field which plays an important role is neurobiology, specifically the study of the biological vision system. Over the last century, there has been an extensive study of eyes, neurons, and the brain structures devoted to processing of visual stimuli in both humans and various animals. This has led to a coarse, yet complicated, description of how "real" vision systems operate in order to solve certain vision-related tasks. These results have led to a subfield within computer vision where artificial systems are designed to mimic the processing and behavior of biological systems, at different levels of complexity. Also, some of the learning-based methods developed within computer vision (e.g. neural net based image and feature analysis and classification) have their background in biology.

Some strands of computer vision research are closely related to the study of biological vision, just as many strands of AI research are closely tied to research into human consciousness and the use of stored knowledge to interpret, integrate and utilize visual information. The field of biological vision studies and models the physiological processes behind visual perception in humans and other animals. Computer vision, on the other hand, studies and describes the processes implemented in software and hardware behind artificial vision systems. Interdisciplinary exchange between biological and computer vision has proven fruitful for both fields.

Yet another field related to computer vision is signal processing. Many methods for processing of one-variable signals, typically temporal signals, can be extended in a natural way to processing of two-variable signals or multi-variable signals in computer vision. However, because of the specific nature of images there are many methods developed within computer vision which have no counterpart in the processing of one-variable signals. Together with the multi-dimensionality of the signal, this defines a subfield in signal processing as a part of computer vision.

Besides the above-mentioned views on computer vision, many of the related research topics can also be studied from a purely mathematical point of view. For example, many methods in computer vision are based on statistics, optimization or geometry. Finally, a significant part of the field is devoted to the implementation aspect of computer vision: how existing methods can be realized in various combinations of software and hardware, or how these methods can be modified in order to gain processing speed without losing too much performance.

The fields most closely related to computer vision are image processing, image analysis and machine vision. There is a significant overlap in the range of techniques and applications that these cover. This implies that the basic techniques that are used and developed in these fields are more or less identical, which can be interpreted as there being only one field with different names. On the other hand, it appears to be necessary for research groups, scientific journals, conferences and companies to present or market themselves as belonging specifically to one of these fields and, hence, various characterizations which distinguish each of the fields from the others have been presented.

Computer vision is, in some ways, the inverse of computer graphics. While computer graphics produces image data from 3D models, computer vision often produces 3D models from image data. There is also a trend towards a combination of the two disciplines, e.g., as explored in augmented reality.

The following characterizations appear relevant but should not be taken as universally accepted:

- Image processing and image analysis tend to focus on 2D images, how to transform one image to another, e.g., by pixel-wise operations such as contrast enhancement, local operations such as edge extraction or noise removal, or geometrical transformations such as rotating the image. This characterization implies that image processing/analysis neither requires assumptions nor produces interpretations about the image content.

- Computer vision includes 3D analysis from 2D images. This analyzes the 3D scene projected onto one or several images, e.g., how to reconstruct structure or other information about the 3D scene from one or several images. Computer vision often relies on more or less complex assumptions about the scene depicted in an image.

- Machine vision is the process of applying a range of technologies and methods to provide imaging-based automatic inspection, process control and robot guidance in industrial applications. Machine vision tends to focus on applications, mainly in manufacturing, e.g., vision based autonomous robots and systems for vision based inspection or measurement. This implies that image sensor technologies and control theory often are integrated with the processing of image data to control a robot and that real-time processing is emphasised by means of efficient implementations in hardware and software. It also implies that the external conditions such as lighting can be and are often more controlled in machine vision than they are in general computer vision, which can enable the use of different algorithms.

- There is also a field called imaging which primarily focuses on the process of producing images, but sometimes also deals with processing and analysis of images. For example, medical imaging includes substantial work on the analysis of image data in medical applications.

- Finally, pattern recognition is a field which uses various methods to extract information from signals in general, mainly based on statistical approaches. A significant part of this field is devoted to applying these methods to image data.
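To make the distinction between pixel-wise and local operations in the first characterization concrete, here is a minimal Python sketch on a greyscale image stored as a list of rows. The function names `stretch_contrast` and `horizontal_gradient`, the linear stretch, and the central-difference edge measure are illustrative assumptions chosen for brevity; practical systems typically use more elaborate operators.

```python
def stretch_contrast(image, out_min=0, out_max=255):
    """Pixel-wise operation: linearly rescale intensities to [out_min, out_max]."""
    flat = [p for row in image for p in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:  # uniform image: nothing to stretch
        return [[out_min for _ in row] for row in image]
    scale = (out_max - out_min) / (hi - lo)
    return [[round(out_min + (p - lo) * scale) for p in row] for row in image]


def horizontal_gradient(image):
    """Local operation: central difference along each row, a crude edge measure."""
    out = []
    for row in image:
        n = len(row)
        # Each output value depends on the left and right neighbours (clamped at borders).
        out.append([row[min(x + 1, n - 1)] - row[max(x - 1, 0)] for x in range(n)])
    return out


if __name__ == "__main__":
    print(stretch_contrast([[50, 100], [150, 200]]))
    print(horizontal_gradient([[10, 10, 200, 200]]))
```

Each output pixel of the contrast stretch depends only on the corresponding input pixel (plus two global constants), whereas each gradient value depends on a small neighbourhood. Neither function makes any assumption about what the image depicts.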

Applications for computer vision

One of the most prominent application fields is medical computer vision or medical image processing. This area is characterized by the extraction of information from image data for the purpose of making a medical diagnosis of a patient. Generally, image data is in the form of microscopy images, X-ray images, angiography images, ultrasonic images, and tomography images. An example of information which can be extracted from such image data is detection of tumours, arteriosclerosis or other malign changes. It can also be measurements of organ dimensions, blood flow, etc. This application area also supports medical research by providing new information, e.g., about the structure of the brain, or about the quality of medical treatments.

A second application area in computer vision is in industry, sometimes called machine vision, where information is extracted for the purpose of supporting a manufacturing process. One example is quality control where details or final products are being automatically inspected in order to find defects. Another example is measurement of position and orientation of details to be picked up by a robot arm. Machine vision is also heavily used in agricultural processes to remove undesirable foodstuff from bulk material, a process called optical sorting.

Military applications are probably one of the largest areas for computer vision. The obvious examples are detection of enemy soldiers or vehicles and missile guidance. More advanced systems for missile guidance send the missile to an area rather than a specific target, and target selection is made when the missile reaches the area based on locally acquired image data. Modern military concepts, such as "battlefield awareness", imply that various sensors, including image sensors, provide a rich set of information about a combat scene which can be used to support strategic decisions. In this case, automatic processing of the data is used to reduce complexity and to fuse information from multiple sensors to increase reliability.

Another application area is autonomous vehicles, which include submersibles, land-based vehicles (small robots with wheels, cars or trucks), aerial vehicles, and unmanned aerial vehicles (UAV). The level of autonomy ranges from fully autonomous (unmanned) vehicles to vehicles where computer vision based systems support a driver or a pilot in various situations. Fully autonomous vehicles typically use computer vision for navigation, i.e., for knowing where they are or for producing a map of their environment (SLAM), and for detecting obstacles. It can also be used for detecting certain task-specific events, e.g., a UAV looking for forest fires. Examples of supporting systems are obstacle warning systems in cars, and systems for autonomous landing of aircraft. Several car manufacturers have demonstrated systems for autonomous driving of cars, but this technology has still not reached a level where it can be put on the market. There are ample examples of military autonomous vehicles, ranging from advanced missiles to UAVs for recon missions or missile guidance. Space exploration is already being carried out with autonomous vehicles using computer vision, e.g., NASA's Mars Exploration Rover and ESA's ExoMars Rover.

Other application areas include:

- Support of visual effects creation for cinema and broadcast, e.g., camera tracking (matchmoving).

- Surveillance.

Typical tasks of computer vision

Each of the application areas described above employs a range of computer vision tasks: more or less well-defined measurement problems or processing problems, which can be solved using a variety of methods. Some examples of typical computer vision tasks are presented below.

Recognition

The classical problem in computer vision, image processing, and machine vision is that of determining whether or not the image data contains some specific object, feature, or activity. This task can normally be solved robustly and without effort by a human, but is still not satisfactorily solved in computer vision for the general case: arbitrary objects in arbitrary situations. The existing methods for dealing with this problem can at best solve it only for specific objects, such as simple geometric objects (e.g., polyhedra), human faces, printed or hand-written characters, or vehicles, and in specific situations, typically described in terms of well-defined illumination, background, and pose of the object relative to the camera.

Different varieties of the recognition problem are described in the literature:

- Object recognition: one or several pre-specified or learned objects or object classes can be recognized, usually together with their 2D positions in the image or 3D poses in the scene. Google Goggles provides a stand-alone program illustration of this function.

- Identification: An individual instance of an object is recognized. Examples: identification of a specific person's face or fingerprint, or identification of a specific vehicle.

- Detection: the image data is scanned for a specific condition. Examples: detection of possible abnormal cells or tissues in medical images or detection of a vehicle in an automatic road toll system. Detection based on relatively simple and fast computations is sometimes used for finding smaller regions of interesting image data which can be further analyzed by more computationally demanding techniques to produce a correct interpretation.
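The two-stage idea in the detection item, a cheap scan that selects regions followed by a costlier analysis of only those regions, can be sketched as follows. This is a minimal illustration in plain Python, not a method taken from the text: the block-mean test, the bright-pixel fraction used as a stand-in for the "more computationally demanding" stage, and all thresholds and function names are assumptions chosen for the example.

```python
from typing import List, Tuple

Image = List[List[int]]  # grayscale image as rows of 0..255 intensities


def block_mean(img: Image, top: int, left: int, size: int) -> float:
    """Cheap stage: average intensity of a size x size block."""
    total = sum(img[r][c]
                for r in range(top, top + size)
                for c in range(left, left + size))
    return total / (size * size)


def candidate_blocks(img: Image, size: int,
                     mean_threshold: float) -> List[Tuple[int, int]]:
    """Scan non-overlapping blocks and keep those passing the cheap test."""
    rows, cols = len(img), len(img[0])
    return [(top, left)
            for top in range(0, rows - size + 1, size)
            for left in range(0, cols - size + 1, size)
            if block_mean(img, top, left, size) >= mean_threshold]


def bright_fraction(img: Image, top: int, left: int, size: int,
                    pixel_threshold: int) -> float:
    """Stand-in for a costlier analysis: fraction of very bright pixels."""
    bright = sum(1
                 for r in range(top, top + size)
                 for c in range(left, left + size)
                 if img[r][c] >= pixel_threshold)
    return bright / (size * size)


def detect(img: Image, size: int = 2, mean_threshold: float = 100.0,
           pixel_threshold: int = 200,
           min_fraction: float = 0.75) -> List[Tuple[int, int]]:
    """Run the expensive check only on blocks the cheap scan selected."""
    return [(top, left)
            for top, left in candidate_blocks(img, size, mean_threshold)
            if bright_fraction(img, top, left, size,
                               pixel_threshold) >= min_fraction]


# Hypothetical 4x4 test image: one bright block, one medium-gray block.
example = [
    [10, 10, 250, 250],
    [10, 10, 250, 240],
    [120, 120, 0, 0],
    [120, 120, 0, 0],
]
```

In this toy image the cheap scan keeps two of the four blocks, and the second stage then rejects the uniformly medium-gray one, so only the bright block is reported.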

Several specialized tasks based on recognition exist, such as:

- Content-based image retrieval: finding all images in a larger set of images which have a specific content. The content can be specified in different ways, for example in terms of similarity relative to a target image (give me all images similar to image X), or in terms of high-level search criteria given as text input (give me all images which contain many houses, are taken during winter, and have no cars in them).

- Pose estimation: estimating the position or orientation of a specific object relative to the camera. An example application for this technique would be assisting a robot arm in retrieving objects from a conveyor belt in an assembly line situation or picking parts from a bin.

- Optical character recognition (OCR): identifying characters in images of printed or handwritten text, usually with a view to encoding the text in a format more amenable to editing or indexing (e.g. ASCII).

- 2D code reading: reading of 2D codes such as data matrix and QR codes.

- Facial recognition.
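As a rough illustration of retrieval by similarity to a target image, the sketch below ranks a small collection by histogram intersection of gray-level histograms. The choice of histogram intersection, the four-bin quantization, and the names `histogram`, `intersection` and `rank_by_similarity` are assumptions made for this example; practical systems use considerably richer descriptors.

```python
from typing import Dict, List


def histogram(pixels: List[int], bins: int = 4,
              max_value: int = 256) -> List[float]:
    """Normalized gray-level histogram of a flat list of 0..255 pixels."""
    counts = [0] * bins
    width = max_value / bins
    for p in pixels:
        counts[min(int(p // width), bins - 1)] += 1
    return [c / len(pixels) for c in counts]


def intersection(h1: List[float], h2: List[float]) -> float:
    """Histogram intersection: 1.0 for identical normalized histograms."""
    return sum(min(a, b) for a, b in zip(h1, h2))


def rank_by_similarity(query: List[int],
                       database: Dict[str, List[int]]) -> List[str]:
    """Return image names ordered from most to least similar to the query."""
    query_hist = histogram(query)
    scored = [(intersection(query_hist, histogram(pixels)), name)
              for name, pixels in database.items()]
    # Stable sort: ties keep their insertion order from the database.
    return [name for score, name in sorted(scored, key=lambda s: -s[0])]


# Hypothetical toy collection of flattened grayscale images.
collection = {
    "dark": [0, 5, 10, 20],
    "mixed": [5, 20, 240, 250],
    "bright": [200, 220, 240, 250],
}
ranking = rank_by_similarity([0, 10, 250, 255], collection)
```

A histogram ignores where pixels are located, so two very different scenes can score as similar; that limitation is one reason this is only a sketch of the idea.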

Motion analysis

Several tasks relate to motion estimation, where an image sequence is processed to produce an estimate of the velocity either at each point in the image or in the 3D scene, or even of the camera that produces the images. Examples of such tasks are:

- Egomotion: determining the 3D rigid motion (rotation and translation) of the camera from an image sequence produced by the camera.

- Tracking: following the movements of a (usually) smaller set of interest points or objects (e.g., vehicles or humans) in the image sequence.

- Optical flow: determining, for each point in the image, how that point is moving relative to the image plane, i.e., its apparent motion. This motion is a result both of how the corresponding 3D point is moving in the scene and how the camera is moving relative to the scene.
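The apparent motion of a small image region between two frames can be estimated in several ways. The sketch below uses exhaustive block matching with a sum-of-absolute-differences cost, which is a simple stand-in for the motion estimation tasks listed above and not a dense optical flow method. The function names, the search radius, and the toy frames built by the hypothetical helper `make_frame` are assumptions for illustration.

```python
from typing import List, Tuple

Frame = List[List[int]]  # grayscale frame as rows of intensities


def sad(prev: Frame, curr: Frame, top: int, left: int,
        dy: int, dx: int, size: int) -> int:
    """Sum of absolute differences between a block and its shifted copy."""
    return sum(abs(prev[top + r][left + c] - curr[top + dy + r][left + dx + c])
               for r in range(size) for c in range(size))


def block_motion(prev: Frame, curr: Frame, top: int, left: int,
                 size: int, search: int) -> Tuple[int, int]:
    """Find the (dy, dx) shift within +/-search that best matches the block."""
    rows, cols = len(curr), len(curr[0])
    best = None
    for dy in range(-search, search + 1):
        for dx in range(-search, search + 1):
            # Skip shifts that would move the block outside the frame.
            if not (0 <= top + dy and top + dy + size <= rows
                    and 0 <= left + dx and left + dx + size <= cols):
                continue
            cost = sad(prev, curr, top, left, dy, dx, size)
            if best is None or cost < best[0]:
                best = (cost, dy, dx)
    return best[1], best[2]


def make_frame(top: int, left: int) -> Frame:
    """Hypothetical helper: 5x5 black frame with a textured 2x2 patch."""
    frame = [[0] * 5 for _ in range(5)]
    for r, row in enumerate([[9, 8], [7, 6]]):
        for c, value in enumerate(row):
            frame[top + r][left + c] = value
    return frame


previous_frame = make_frame(1, 1)
current_frame = make_frame(2, 3)  # patch moved 1 row down, 2 columns right
shift = block_motion(previous_frame, current_frame,
                     top=1, left=1, size=2, search=2)
```

Repeating this search for many blocks gives a coarse motion field. The result describes apparent motion only, which, as noted above, mixes scene motion and camera motion.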

Scene reconstruction

Given one or (typically) more images of a scene, or a video, scene reconstruction aims at computing a 3D model of the scene. In the simplest case the model can be a set of 3D points. More sophisticated methods produce a complete 3D surface model.

Image restoration

The aim of image restoration is the removal of noise (sensor noise, motion blur, etc.) from images. The simplest approach to noise removal is to apply filters such as low-pass filters or median filters. More sophisticated methods assume a model of what the local image structures look like, a model which distinguishes them from the noise. By first analysing the image data in terms of the local image structures, such as lines or edges, and then controlling the filtering based on local information from the analysis step, a better level of noise removal is usually obtained compared to the simpler approaches. An example in this field is inpainting.
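A median filter, one of the simple filters mentioned above, can be sketched in a few lines. This version uses a 3x3 window and leaves border pixels unchanged; both choices, and the function name, are assumptions made to keep the example short. It is best suited to isolated outlier pixels and does not address motion blur.

```python
from statistics import median
from typing import List

Image = List[List[int]]  # grayscale image as rows of intensities


def median_filter_3x3(img: Image) -> Image:
    """Replace each interior pixel by the median of its 3x3 neighbourhood.

    Border pixels are copied unchanged (an assumption for brevity).
    """
    rows, cols = len(img), len(img[0])
    out = [row[:] for row in img]  # copy so the input is not modified
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            window = [img[r + dr][c + dc]
                      for dr in (-1, 0, 1)
                      for dc in (-1, 0, 1)]
            out[r][c] = median(window)
    return out


# Hypothetical example: a single outlier pixel in a flat region.
noisy = [
    [10, 10, 10],
    [10, 255, 10],
    [10, 10, 10],
]
cleaned = median_filter_3x3(noisy)
```

Because the median ignores extreme values in the window, the outlier is replaced by the surrounding level, whereas a mean (low-pass) filter would smear it into its neighbours.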

3D volume recognition

3D volume recognition relies on parallax depth perception.

Generally a 3D volume cannot be easily reconstructed from a single 2D image, because 2D depth analysis of a single image requires a great deal of secondary knowledge about shapes and shadows, and what constitutes separate objects or simply different parts of the same object. But a single 2D camera can be used to do 3D depth analysis if several images can be captured from different positions in space and parallax analysis is done between the different images.

3D volume recognition from only two 2D images in parallax results in a distorted 2.5D plane with depth information, with incomplete backsides to the object field. Transitions between near and far objects are sudden but continuous. Areas of shadow or washout, or where a shape does not appear in both images, result in a null space of no depth information.

The rear profile of shape impressions in this 2.5D plane can sometimes be inferred from the incomplete front sides, using a database of known 3D shape profiles. The shape recognition process is faster if certain assumptions can be made about the images to be recognized; for example, a warship radar which generates a 2.5D depth map of friend/foe objects on the surrounding ocean only needs to identify shapes known to represent other ships in an upright position. It does not need to consider shapes where the ships are lying sideways, upside down, or at an unusually high-pitched angle out of the water.

The volumetric detail of the back sides of objects can be increased by using many more parallax image sets of the scene from different angles and locations, and comparing each 2.5D volume against previously analyzed 2.5D volumes to find shape/angle overlap between the volumes. 3D parallax recognition can be more accurate and faster if the different 2D images are captured from known specific source positions and angles.
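When the two camera positions are known, the parallax between matching points converts directly to depth. The sketch below assumes the simplest case, which is not stated in the text above: two identical pinhole cameras with parallel optical axes, separated by a known baseline, so that depth is Z = f * B / d for focal length f (in pixels), baseline B and disparity d (in pixels). Points without a match in both images are kept as `None`, mirroring the regions of no depth information described above. All names and numbers are hypothetical.

```python
from typing import List, Optional


def depth_from_disparity(focal_px: float, baseline_m: float,
                         disparity_px: Optional[float]) -> Optional[float]:
    """Depth in metres for a rectified stereo pair: Z = f * B / d.

    Returns None when there is no valid match (missing or non-positive
    disparity), i.e. no depth information for that point.
    """
    if disparity_px is None or disparity_px <= 0:
        return None
    return focal_px * baseline_m / disparity_px


def depth_row(focal_px: float, baseline_m: float,
              disparities: List[Optional[float]]) -> List[Optional[float]]:
    """Convert one row of a disparity map into a row of a 2.5D depth map."""
    return [depth_from_disparity(focal_px, baseline_m, d)
            for d in disparities]


# Hypothetical camera: 800 px focal length, 0.5 m baseline.
row = depth_row(800.0, 0.5, [40.0, 80.0, None, 0.0])
```

Nearby objects produce large disparities and distant ones small disparities, so depth resolution degrades with distance; a wider baseline improves it at the cost of more points being visible in only one image.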

Computer vision system methods

The organization of a computer vision system is highly application dependent. Some systems are stand-alone applications which solve a specific measurement or detection problem, while others constitute a sub-system of a larger design which, for example, also contains sub-systems for control of mechanical actuators, planning, information databases, man-machine interfaces, etc. The specific implementation of a computer vision system also depends on whether its functionality is pre-specified or whether some part of it can be learned or modified during operation. Many functions are unique to the application. There are, however, typical functions which are found in many computer vision systems.

- Image acquisition: A digital image is produced by one or several image sensors, which, besides various types of light-sensitive cameras, include range sensors, tomography devices, radar, ultra-sonic cameras, etc. Depending on the type of sensor, the resulting image data is an ordinary 2D image, a 3D volume, or an image sequence. The pixel values typically correspond to light intensity in one or several spectral bands (gray images or colour images), but can also be related to various physical measures, such as depth, absorption or reflectance of sonic or electromagnetic waves, or nuclear magnetic resonance.

- Pre-processing: Before a computer vision method can be applied to image data in order to extract some specific piece of information, it is usually necessary to process the data in order to assure that it satisfies certain assumptions implied by the method. Examples are

- Re-sampling in order to assure that the image coordinate system is correct.

- Noise reduction in order to assure that sensor noise does not introduce false information.

- Contrast enhancement to assure that relevant information can be detected.

- Scale-space representation to enhance image structures at locally appropriate scales.

- Feature extraction: Image features at various levels of complexity are extracted from the image data. Typical examples of such features are

- Lines, edges and ridges.

- Localized interest points such as corners, blobs or points.

- More complex features may be related to texture, shape or motion.

- Detection/segmentation: At some point in the processing a decision is made about which image points or regions of the image are relevant for further processing. Examples are

- Selection of a specific set of interest points

- Segmentation of one or multiple image regions which contain a specific object of interest.

- High-level processing: At this step the input is typically a small set of data, for example a set of points or an image region which is assumed to contain a specific object. The remaining processing deals with, for example:

- Verification that the data satisfy model-based and application specific assumptions.

- Estimation of application specific parameters, such as object pose or object size.

- Image recognition: classifying a detected object into different categories.

- Image registrationImage registrationImage registration is the process of transforming different sets of data into one coordinate system. Data may be multiple photographs, data from different sensors, from different times, or from different viewpoints. It is used in computer vision, medical imaging, military automatic target...

: comparing and combining two different views of the same object.

- Decision making: making the final decision required for the application, for example:

- Pass/fail on automatic inspection applications

- Match / no-match in recognition applications

- Flag for further human review in medical, military, security and recognition applications
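The stages listed above can be chained into a very small inspection pipeline. The sketch below is one possible arrangement under strong simplifying assumptions: pre-processing is a linear contrast stretch, segmentation is a fixed threshold, the high-level step estimates object size as a pixel count, and the decision is a pass/fail test against hypothetical area limits. The feature extraction stage is omitted for brevity. None of the function names or thresholds come from the text.

```python
from typing import List

Image = List[List[int]]  # grayscale image as rows of intensities


def stretch_contrast(img: Image) -> Image:
    """Pre-processing: map the darkest pixel to 0 and the brightest to 255."""
    lo = min(min(row) for row in img)
    hi = max(max(row) for row in img)
    if hi == lo:  # flat image: nothing to stretch
        return [[0 for _ in row] for row in img]
    return [[(p - lo) * 255 // (hi - lo) for p in row] for row in img]


def segment(img: Image, threshold: int = 128) -> Image:
    """Detection/segmentation: binary mask of pixels at or above threshold."""
    return [[1 if p >= threshold else 0 for p in row] for row in img]


def object_area(mask: Image) -> int:
    """High-level processing: estimate object size as a pixel count."""
    return sum(sum(row) for row in mask)


def inspect(img: Image, min_area: int, max_area: int) -> str:
    """Decision making: pass if the segmented area is within the limits."""
    area = object_area(segment(stretch_contrast(img)))
    return "pass" if min_area <= area <= max_area else "fail"


# Hypothetical parts: a complete 2x2 feature and a mostly missing one.
good_part = [
    [50, 50, 50, 50],
    [50, 90, 90, 50],
    [50, 90, 90, 50],
    [50, 50, 50, 50],
]
bad_part = [
    [50, 50, 50, 50],
    [50, 90, 50, 50],
    [50, 50, 50, 50],
    [50, 50, 50, 50],
]
```

With area limits of 3 to 5 pixels, `inspect(good_part, 3, 5)` accepts the first part and `inspect(bad_part, 3, 5)` rejects the second. A real system would replace each stage with a method suited to its sensor and task, but the flow of data between stages stays the same.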
